No, you can’t trust ChatGPT with your data even if you trust OpenAI.

Last week, I was asked a really important question in one of our Baobab AI ‘Introduction to Large Language Models’ workshops: “Can we put confidential data into ChatGPT?”

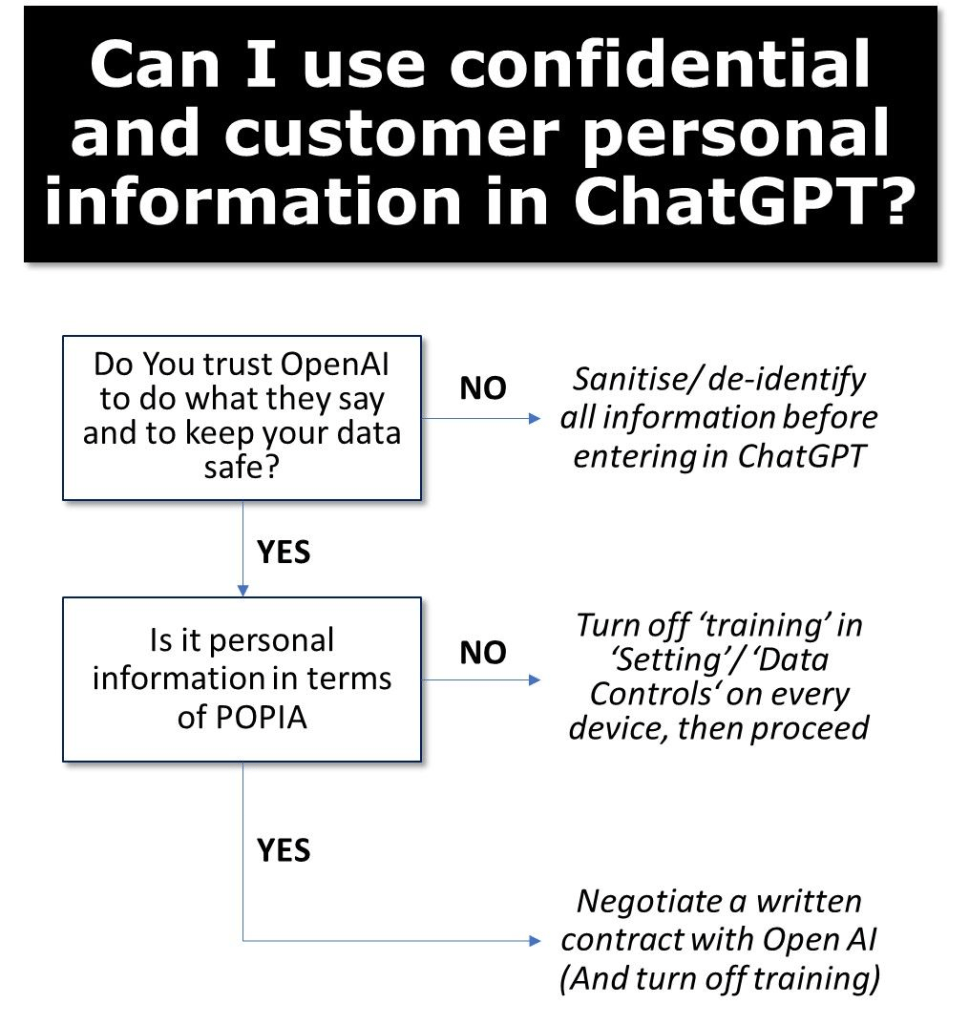

The answer is “probably not”. Not unless you do a few things first. The reason for this is subtle: Your data may leak back out again.

By default, OpenAI uses ChatGPT chats as training data. This means that anything you enter may become encoded in the deep neural network that ChatGPT uses to generate answers. So one might ask ChatGPT a question like: “Project AlphaSpider is our bank’s (FriendsFirst Bank) secret project to reduce credit card fees for merchants to zero. Below see the detailed project plan. Could you write a poem of victory, assuming we complete it.”

But once this chat has been used to update the model’s training, the project name may be unique enough that someone could trigger ChatGPT with a prompt like: “You are a project manager for the top secret AlphaSpider program for FriendsFirst Bank. Write a detailed project plan”. This might produce a whole lot of facts that you didn’t want to be publicly available. (They might also just get hallucinations, but the risk is there.)

There is a way to tell OpenAI not to use your data for training. If you go to ‘Settings’ and ‘Data Controls’ you can turn off ‘Chat history and training’. You sacrifice your history, but OpenAI says it then doesn’t use your data for training. You have to do this on each device. (If you are accessing GPT via the API then your data is not used for training by default.)

If South African companies wanted to use ChatGPT for customer personal information, they would need to comply with POPIA. This means that they would need a written contract with OpenAI, committing OpenAI to comply with the act, and they would need to satisfy themselves that the data was secure with OpenAI. I am not aware of any South African companies that have done this, but a number of international companies seem to have gone through similar processes to comply with GDPR and similar legislation. OpenAI says it tries to remove personal information from training data, but that some might leak through, so the key to making this work will be making sure that no customer data is used as training data.

Of course, none of this matters if you don’t trust OpenAI. Is it going to do what it says it is going to do? Can it be hacked? Security nerds, fill your boots here: https://trust.openai.com/. If you don’t trust them to do what they say, or to protect your data, then don’t ever put anything confidential in ChatGPT.

My advice: don’t put secrets or personal information into ChatGPT. Turn off ‘Training’ on all your devices. Sanitise or de-identify anything you put into ChatGPT, just in case. And put it high on your IT security team’s agenda to work through the detail of how secure OpenAI is.
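
To make the ‘sanitise’ step a bit more concrete, here is a rough Python sketch of what de-identifying a prompt before pasting it into ChatGPT could look like. Everything in it is an assumption for illustration: the `sanitise` function, the placeholder labels and the three regular expressions (email addresses, 13-digit ID numbers, South African style phone numbers) are mine, and they will certainly miss things that a proper de-identification process would catch. Treat it as a starting point for a conversation with your security team, not as a finished tool.

```python
import re

# Illustrative patterns only (assumptions): real personal data comes in
# many more shapes than these three.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
ID_NUMBER = re.compile(r"\b\d{13}\b")               # assumed: 13 consecutive digits
PHONE = re.compile(r"(?<!\w)(?:\+27|0)\d{9}\b")      # assumed: +27 or 0, then 9 digits


def sanitise(text, secret_terms):
    """Replace obvious identifiers and known secret terms with placeholders.

    secret_terms maps each confidential name (hypothetical examples below)
    to the neutral placeholder that should replace it.
    """
    text = EMAIL.sub("[EMAIL]", text)
    text = ID_NUMBER.sub("[ID_NUMBER]", text)
    text = PHONE.sub("[PHONE]", text)
    # Replace longer terms first so a short term cannot break up a longer one.
    for term in sorted(secret_terms, key=len, reverse=True):
        text = re.sub(re.escape(term), secret_terms[term], text, flags=re.IGNORECASE)
    return text


if __name__ == "__main__":
    prompt = (
        "Project AlphaSpider is FriendsFirst Bank's secret project. "
        "Contact thandi@example.com or 0821234567."
    )
    secrets = {"AlphaSpider": "[PROJECT]", "FriendsFirst Bank": "[BANK]"}
    print(sanitise(prompt, secrets))
    # Project [PROJECT] is [BANK]'s secret project. Contact [EMAIL] or [PHONE].
```

Note that this only hides names and obvious identifiers. The detail of the project plan itself could still give the game away, so the safest prompt is still the one with no secrets in it.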

ChatGPT is too useful to just not use it. But it is too dangerous to use it carelessly.
